BrainBox AI CTO on the future of AI and sustainability

Everyone’s talking about generative AI tools like ChatGPT and DALL-E, but what exactly is generative AI? How does it differ from automated AI? And how can the two work together to tackle pressing issues like climate change? We sat down with BrainBox AI co-founder and CTO Jean-Simon Venne to talk about generative vs. automated AI, how they could work in tandem to reduce commercial building emissions, and the responsibility of tech companies to help regulate this burgeoning beast.

To kick us off, could you briefly describe what AI is?

Well, firstly, AI is a really broad term. On a basic level, it describes a machine that’s given a set of rules or parameters that it follows to reach a conclusion in just a few seconds. So, an outcome that would have taken us hours or days to come up with on our own can now be done in a fraction of the time. This type of traditional AI has been around for a long time, but what’s really kicked in within the last 10 years or so is deep learning.

Deep learning is loosely modeled on the networks of neurons in our brains, which lets machines make decisions in a way that resembles human judgment. It can even recognize images and process language. Feed it enough data and it can process it, analyze it, learn from it, and reach a conclusion. And as it’s trained on more data, it keeps getting better.

I would say deep learning models are a pretty recent development because it’s only been within the last decade or so that we’ve finally had the computing power to host this kind of technology. Also, memory is now more affordable. These two things have truly helped open up the space for AI to grow and flourish. In fact, it’s opened up the space so rapidly that people worry we won’t be able to manage it. And now, with generative AI on the scene, it’s developing even faster than anyone could have imagined.

Can you tell us a little more about what makes generative AI so powerful and how it differs from automated AI?

What differentiates generative AI from automated AI is that, where automated AI is controlled, generative AI is creative.

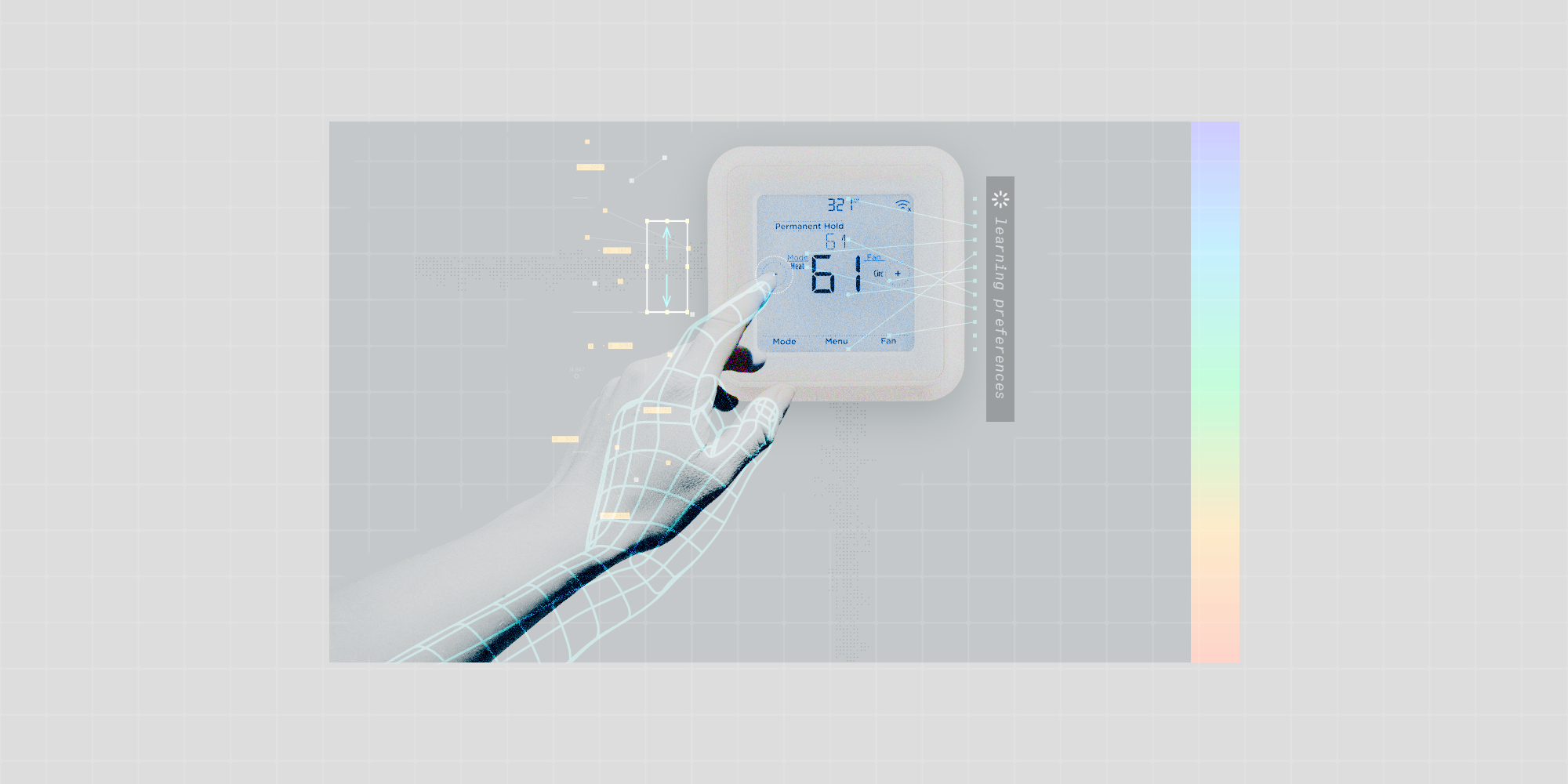

What I mean by this is that automated AI only operates within the narrow set of guidelines that we give it, making it very good at optimizing something that already exists. For example, BrainBox AI uses automated AI to optimize HVAC systems. Our models are trained to be experts on heat pumps, ventilators, damper valves, etc. They have no clue what a Land Rover is. And they don’t need to know because their job is very specialized, and it has nothing to do with Land Rovers.

Generative AI, on the other hand, is designed to create something entirely new from massive amounts of data and parameters. It’s basically made to answer whatever question you have on your mind. It needs to know about history, biology, chemistry, the complete works of Shakespeare – it needs to know everything about everything because it never knows what you’re going to ask it.

So, even though these are two very different systems, they both use deep learning to find the optimal solution to a problem or question. In fact, the generative AI models we have right now, like GPT-4, are among the largest deep learning models in existence. To put it into perspective, the first GPT, launched by OpenAI in 2018, had 117 million parameters; GPT-2, released the next year, had 1.5 billion; and GPT-3, in 2020, had 175 billion. Pretty much no one was thinking we could reach a trillion. Then GPT-4 came around, and it’s widely reported to have passed that mark, although OpenAI hasn’t published the figure. That means these systems can process more data than we ever imagined, and they can learn incredibly complex things from it.

With generative AI evolving at such breakneck speed, it’s easy to see why people say that those who aren’t using it will quickly fall behind. Do you believe this? And if so, how is generative AI being used within BrainBox AI?

Well, I’d be lying if I said I wasn’t playing with it to generate my PowerPoint slides. But more seriously, on the software development side we’re evaluating generative AI for generating test cases and use cases, which help make our code more effective and efficient. Preparing these cases is very time-intensive, and software developers don’t often enjoy it, but with these new generative AI models you can just press a button and have your use cases generated in seconds. That can save around half of a developer’s time, which, for a company, effectively doubles productivity.

So, in this way, yes, I believe that any company not adopting these tools will quickly be left behind.

So clearly generative AI is already changing the way we work, from coding, to use cases, to PowerPoint slides. It’s also creating music, movies, and art. Do you think generative AI, with all its creative applications, could also significantly alter more rigid industries such as the real estate sector?

For sure. I think that in the future, generative AI could have an important role to play in, for example, planning and designing new buildings, especially more sustainable buildings. For instance, if you want to construct a building that optimizes energy use, generative AI could help design the building, the different floors, and the work and living spaces in a way that’s planet-friendly.

It might also help engineers create more sustainable building operation systems. Maybe they could use it to help design a new heat pump that consumes less energy or a new ventilation system that optimizes air quality.

Reducing emissions is clearly a major upside to the application of AI. Do you think that in the future both generative and automated AI could work together to address major issues like sustainability?

In short, yes. Actually, there’s an interesting intersection possibly arising between generative and automated AI in the real estate space, where generative AI could be used to assess a new building and identify opportunities to implement automated AI software that can optimize energy efficiency and reduce emissions. So, once generative AI has been used to scope out a building and suggest various automated AI applications, automated AI can take over and make sure the building is running as efficiently as possible. Now, if we apply these kinds of symbiotic use cases on a large enough scale, there’s real potential for a positive impact on climate change.

Honestly, I don’t think any of this is very far off given how fast AI is evolving. In fact, the other day I was thinking about how, when BrainBox AI was first starting out, I said to my team of 10 at the time, ‘Let’s not wait for governing bodies to agree on how we’ll save the planet from climate change. Let’s help. Let’s start now. Let’s build AI technology so that it has the capability to save the planet.’ Now, today, I look at how far AI has come, and I think, ‘Wow. This is it. We’ve reached the pivotal point. AI will save the planet. So, let’s make damn sure we do it right.’

AI certainly has a lot of potential for good. But in the face of AI’s rapid evolution, what costs, concerns, and questions are cropping up?

I think what we see as costs can also be seen as benefits. The first cost is that some tasks that used to be done by humans are now being done by AI. However, this is also saving people a lot of time and money.

Another cost/benefit is that AI is evolving much faster than we thought. If you’d told me two years ago that AI would be where it is today, I would have said, ‘Not a chance. Maybe in my son’s and daughter’s lifetimes, but certainly not in mine.’ But here we are. It’s happening today. The genie is out of the bottle and we can’t push it back in. So now we must figure out how to adapt to it. We also need to start answering some pretty important questions, like how do we come up with guidelines on how to use this technology?

These are good points. In fact, a central concern surrounding generative AI is that it should be regulated to prevent ethical problems such as discrimination, privacy infringement, misinformation, and the generation of inappropriate or offensive content. Do you think we’ll ever be able to make generative AI behave? And how can we safeguard against its misuse?

Those are the big questions. Can we regulate generative AI? If so, how? Will it be voluntary? And can we trust companies when they say they’re regulating their use of generative AI?

I think one major issue is that the development of this kind of technology is going too fast to even have these debates. It’s going too fast for governing bodies to keep up. And it’s going too fast for the tech industry to decide how to approach it.

That’s why we need businesses with experience in AI to step in and contribute to the debate. These companies need to take the lead in recommending the legislative and ethical guardrails that should be applied. If that doesn’t happen, it will be extremely difficult for governments to catch up and implement regulations.

In this way, I think companies like ours are in a perfect position to advise on how to regulate AI. We’ve been in the AI game for six years now. We know what kinds of issues and problems come up when implementing AI and we’ve invariably had to look at the ethical side of it too – the rights of the customer, data privacy and security, etc. So, I really feel that companies with an already extensive working knowledge of AI should come together, agree on guidelines, educate others, and help pave the way for the safe and effective application of AI that respects our human rights and values.

You know, it’s funny. In the past, I’ve always complained that AI wasn’t developing fast enough. Well, now it is. It’s here. It’s evolving. It’s accelerating. And it’s not stopping. We can’t just press pause to catch our breath and get used to it. We need to adapt at the speed at which it’s evolving. And we need to do it responsibly.